Business intelligence (BI) is becoming a significant determinant of whether a company remains competitive or is overtaken by its rivals. BI refers to collecting, analyzing, and visualizing data on competitors’ strategies and other factors affecting the business, and then deriving insights that guide decisions. Companies increasingly shape their strategies around what their competitors have done, particularly by monitoring competitors’ websites.

The volume of data stored on companies’ web servers, and on the internet at large, is substantial. Although an exact figure does not exist, a 2015 study projected that by 2020 the data stored on the internet would exceed 40 zettabytes (ZB). For context, 1 ZB equals 1 trillion gigabytes.

Notably, this volume is only bound to increase. The more data there is, the greater the need to analyze it and draw insights. Yet there is only so much a human being can do, which suggests that business intelligence that depends on human effort will eventually become unmanageable. The way internet data is collected (web scraping) will therefore have to evolve, and that future lies with AI web scraping. Let’s see how.

What is web scraping?

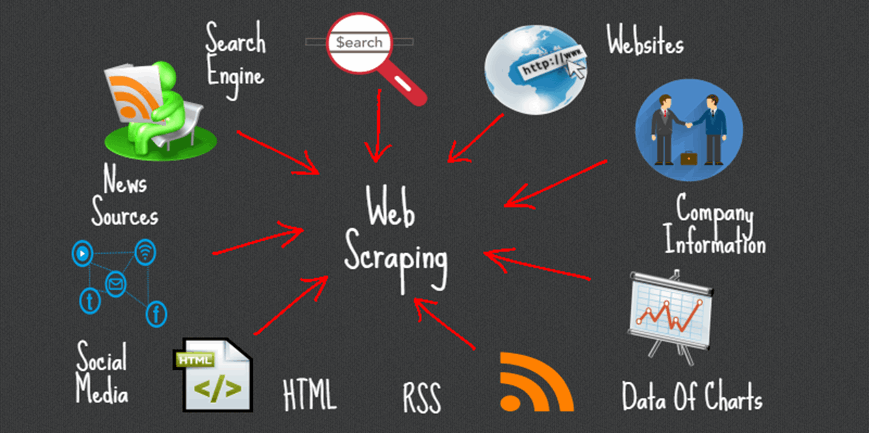

Web scraping, also known as web data harvesting or web data extraction, refers to the automated extraction of data from websites. Although this term also describes the manual act of collecting information, i.e., copying and pasting, it is rarely used in this context. Thus, for this article, web scraping refers to the use of automation in data collection.

Types of web scraping tools

You can scrape the web using various tools, including:

- Ready-to-use web scrapers

- In-house web scrapers

Ready-to-use web scrapers

Scrapers of this type are available off the shelf and collect data automatically through a variety of techniques, depending on how they were built. These techniques include HTML parsing, text pattern matching, XPath, vertical aggregation, and DOM parsing. As a user, you need not know what each of these entails. You simply tell the scraper which website to collect data from, and it gets straight to work. AI-powered web scrapers are available from various providers, such as Oxylabs.
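
To make two of these techniques concrete, here is a minimal sketch of HTML parsing and text pattern matching using only Python's standard library. The page fragment, the `product` and `price` class names, and the function names are all hypothetical, chosen for illustration; an off-the-shelf scraper will likely work differently under the hood.

```python
import re
from html.parser import HTMLParser

# Hypothetical page fragment, used only for illustration.
SAMPLE_HTML = """
<html><body>
  <h2 class="product">Widget A</h2><span class="price">$19.99</span>
  <h2 class="product">Widget B</h2><span class="price">$5.00</span>
</body></html>
"""


class ProductNameParser(HTMLParser):
    """HTML parsing: collect the text inside every <h2 class="product">."""

    def __init__(self):
        super().__init__()
        self._in_product = False
        self.names = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "h2" and ("class", "product") in attrs:
            self._in_product = True

    def handle_data(self, data):
        if self._in_product:
            self.names.append(data.strip())

    def handle_endtag(self, tag):
        if tag == "h2":
            self._in_product = False


def extract_names(html):
    """Return product names found by walking the HTML structure."""
    parser = ProductNameParser()
    parser.feed(html)
    return parser.names


def extract_prices(html):
    """Text pattern matching: find dollar amounts with a regular expression."""
    return [float(m) for m in re.findall(r"\$(\d+(?:\.\d{2})?)", html)]
```

Calling `extract_names(SAMPLE_HTML)` walks the tag structure, while `extract_prices(SAMPLE_HTML)` ignores structure and looks only at the text. The structural approach tends to survive wording changes, whereas the pattern approach tends to survive layout changes.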

In-house web scrapers

Using in-house web scraping tools is more expensive than using ready-to-use scrapers because you need developers to write the scraping code from scratch. That said, most in-house web scrapers are written in Python, which is relatively easy to learn compared with other programming languages. Furthermore, Python has multiple libraries, including request libraries, that provide pre-written code for particular purposes, in this case, web scraping.
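
As a rough idea of what such in-house code can look like, the sketch below fetches a page and lists the links on it. It deliberately uses only Python's standard library (`urllib` and `html.parser`) rather than a third-party request library. The function names, the `User-Agent` string, and the example URLs are assumptions made for illustration.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
from urllib.request import Request, urlopen


class LinkParser(HTMLParser):
    """Collect the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, href))


def fetch(url, timeout=10):
    """Download a page and return its body as text."""
    # The User-Agent value is a made-up example.
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


def extract_links(html, base_url):
    """Return all links found in the given HTML, as absolute URLs."""
    parser = LinkParser(base_url)
    parser.feed(html)
    return parser.links


# Example usage (performs a real network request, so it is left commented out):
# html = fetch("https://example.com/")
# print(extract_links(html, "https://example.com/"))
```

Even this small example hints at why in-house scrapers cost more: error handling, retries, scheduling, and storage all still have to be written and maintained by your own developers.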

Thus, choosing between ready-to-use and in-house web scrapers depends on your budget and whether you have the developers required for the latter. Either option can serve both small and large-scale applications. But to use them effectively in large-scale data extraction, you also have to deploy rotating proxy servers. Rotating proxy servers support web scraping in the following ways:

- They mask your computer’s real IP address, providing the anonymity needed to collect data from websites. Websites are quick to blacklist computers, by IP address, when they detect bot-like activity.

- They change the assigned IP address every few minutes, thus mimicking human behavior by ensuring that only a few web requests originate from a single IP address. This keeps web scraping running smoothly because web scrapers usually send many requests to a single web server, which could lead to blacklisting if no proxies were used.
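
The rotation idea can be sketched in a few lines of Python. Note that this illustration rotates after a fixed number of requests rather than every few minutes, which is a simplification that makes the behavior easy to follow. The `ProxyRotator` class, its parameters, and the proxy addresses are hypothetical, and a commercial rotating proxy service would normally handle this for you.

```python
from itertools import cycle


class ProxyRotator:
    """Hand out proxies so that only a few requests use the same address."""

    def __init__(self, proxies, requests_per_proxy=3):
        if not proxies:
            raise ValueError("at least one proxy is required")
        if requests_per_proxy < 1:
            raise ValueError("requests_per_proxy must be at least 1")
        self._pool = cycle(proxies)      # endless loop over the proxy list
        self._limit = requests_per_proxy
        self._current = next(self._pool)
        self._used = 0                   # requests sent through the current proxy

    def next_proxy(self):
        """Return the proxy to use for the next request."""
        if self._used >= self._limit:
            # The current proxy has served its quota, so move to the next one.
            self._current = next(self._pool)
            self._used = 0
        self._used += 1
        return self._current


# Placeholder addresses from a documentation-only IP range.
rotator = ProxyRotator(
    ["http://203.0.113.10:8080", "http://203.0.113.11:8080"],
    requests_per_proxy=2,
)
```

Each outgoing request would ask `rotator.next_proxy()` for an address and route through it, for example with `urllib.request.ProxyHandler`. From the target server's point of view, the traffic then appears to come from several visitors rather than one very busy machine.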

Nonetheless, the use of web scrapers alongside proxies will ultimately prove ineffective in the future, particularly as the volume of information grows. This is because the human involvement these tools still require will likely slow down data collection and make the process error-prone. Additionally, the amount of data collected will be limited. These reasons underscore the importance of AI web scraping.

The future of web scraping

As mentioned earlier, the future of data collection lies with AI web scraping. Artificial intelligence (AI) will address the shortfalls of human players in the data collection ecosystem. It will speed up data collection and analysis by automating both basic and complex tasks, that is, through full automation.

Importantly, public data gathering involves managing proxies, web crawling, data fingerprinting, actual data collection, rendering websites, converting them into a structured format for analysis, and more. The sheer volume of data available on the internet makes an already complex process even more complicated. Fortunately, the automation that AI provides is a major relief. AI web scraping is adaptable to the ever-changing internet ecosystem and is, therefore, ideal for extracting large volumes of public data.
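
Of the steps listed above, converting scraped content into a structured format is the easiest to illustrate. The sketch below is a plain, non-AI example using Python's standard library: it cleans up raw scraped values and writes them out as JSON or CSV. The record fields (`name`, `price`) and the function names are hypothetical.

```python
import csv
import io
import json


def normalize(record):
    """Turn one raw scraped record into clean, typed fields."""
    return {
        "name": record["name"].strip(),
        # Drop the currency symbol and thousands separators before converting.
        "price": float(record["price"].replace("$", "").replace(",", "")),
    }


def to_json(records):
    """Serialize cleaned records as a JSON array."""
    return json.dumps([normalize(r) for r in records], indent=2)


def to_csv(records):
    """Serialize cleaned records as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"], lineterminator="\n")
    writer.writeheader()
    writer.writerows(normalize(r) for r in records)
    return buffer.getvalue()


# Raw values as they might come off a page, with stray spaces and symbols.
raw = [
    {"name": " Widget A ", "price": "$1,299.50"},
    {"name": "Widget B", "price": "$5.00"},
]
```

Hand-written rules like those in `normalize` break whenever a site changes how it presents its data. This is the kind of maintenance burden that adaptable, AI-driven scraping aims to reduce.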

In the business world, AI-driven web scraping will ease data collection for analysis purposes. It will be a necessity rather than an exception, especially given the expected growth in the volume of data on the internet.